Mobile AR

Al Wasl Dome, Dubai - 2024

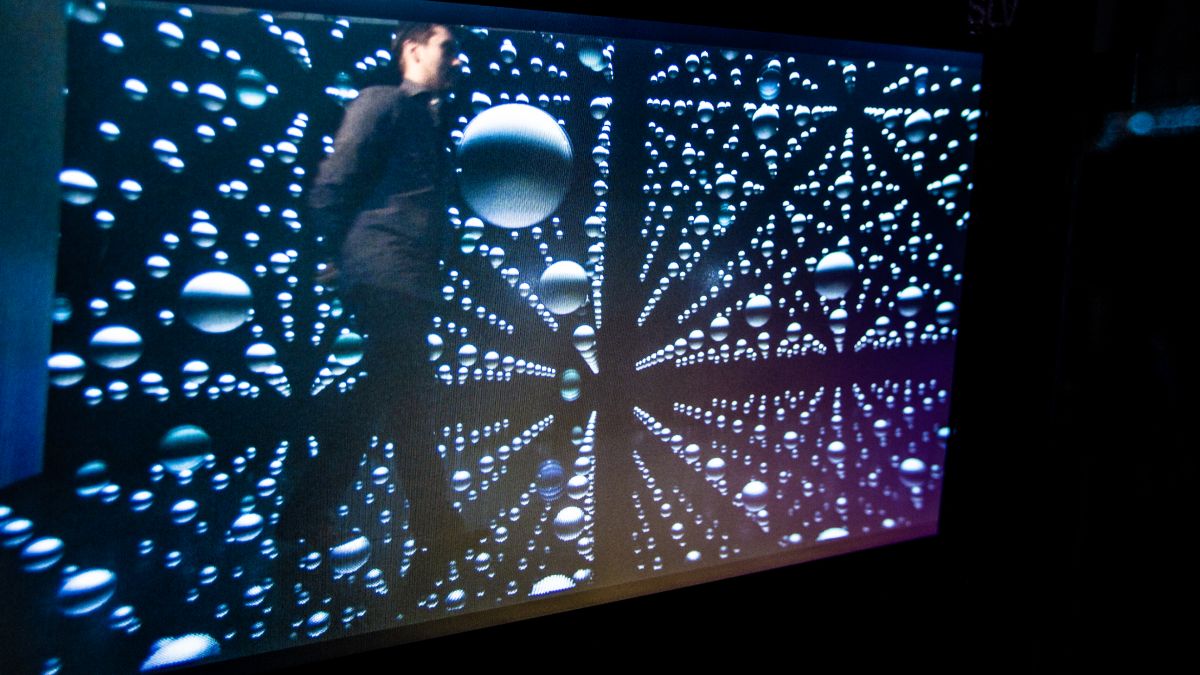

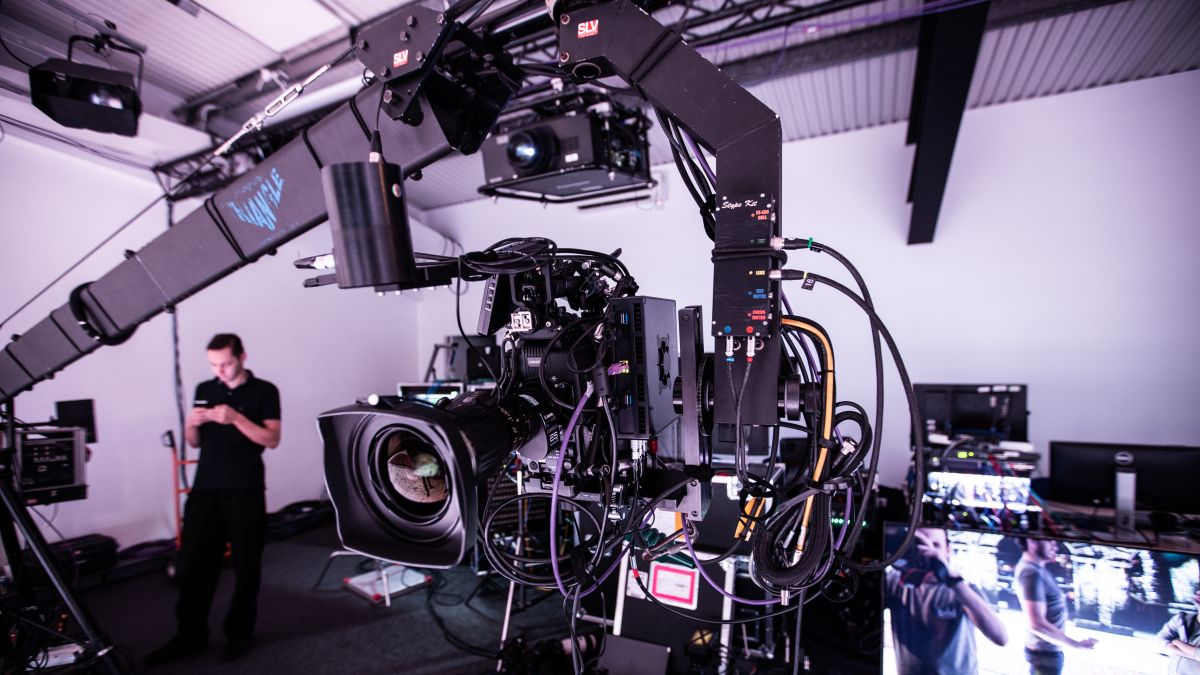

Augmented Reality for Live Productions

2018, London

Following our first research demo, "Live Stage Augmented Reality", created by Bild in 2017, we continue to explore workflows for creating Augmented Reality (AR) content for a wide range of applications and productions.

The workflow and graphics presented here run entirely in realtime; scenes react instantly to the movements of tracked cameras, people and objects, and creative changes can be made on the fly.

Using this approach, AR graphics can be displayed on physical LED screens, added to the broadcast feed only, or both composited together, allowing 3D content to appear both in front of and behind a performer. Because performers are also tracked in 3D space, they can actively interact with the augmented graphics. For example, 3D content, augmented products or lights can be attached to a person and appear perfectly real in the broadcast output.

Credits

Produced by: Bild Studios

Technology Partner & Facilitator: CT London

With great support from:

Realtime graphics software: Notch

Performer tracking: Blacktrax

Mediaserver & Integration: disguise

Camera tracking: Stype
